Moving from Evidence-Based Programs to Evidence-Based Practices

October 9, 2017

The Forum for Youth Investment recently brought together senior career federal government staff and leaders from nongovernmental organizations to hear about two promising approaches that utilize evidence-based practices as opposed to evidence-based programs. Supported by the W.T. Grant and Annie E. Casey Foundations, the event included multiple presentations and a roundtable discussion designed to facilitate dialogue about this approach’s applicability to other policy areas and any potential challenges in implementing it.

By practices, the Forum means the individual activities inside the ‘black box’ of a program that cause it to be successful. These can be certain behaviors that staff are supposed to replicate, certain structures that need to be in place or certain levels of ‘dosage’ for particular interventions. A practices-based approach allows local policymakers to use evidence to ensure that their homegrown program is effective even if they are unable to find an evidence-based program that fits their population or environment, or have limited capacity to implement a program backed by a Randomized Controlled Trial (RCT).

A practices-based approach lets policymakers maintain quality while preserving flexibility. It starts by having researchers extract ‘what works’ across various programs in a given policy area. They can then break those findings down into information that existing homegrown programs can use. Intermediaries can then work with program administrators to implement these practices by developing tools or protocols to assess:

- how their program incorporates evidence-based practices,

- their program participants’ needs,

- the ‘fit’ of their program to their participants,

- their capacity to implement evidence-based practices with staff training and recruitment, and

- outcomes after successful implementation.

What’s exciting about this approach is its potential to improve results at a much greater scale by providing research insights into certain challenges faced by decision-makers. The Center for the Study of Social Policy, as part of its Friends of Evidence group, recently noted that this approach can help:

- Create coherent, manageable systems based on a consistent set of principles, rather than a disconnected set of unrelated programs;

- Build the capacity of front-line practitioners;

- Advance equity by reaching a broader, more diverse range of people who can benefit from effective approaches by going beyond the specific sub-populations for which particular interventions may have been tested;

- Efficiently allocate resources, particularly with regard to decisions about “home-grown” approaches that incorporate many of the elements of evidence-based programs but that have not been formally evaluated; and

- Establish mechanisms to continually learn from experience and improve performance.[i]

The approach translates the latest evidence into actionable insights for policymakers. By supporting practitioners and ensuring that their programs are utilizing the right practices, researchers are ultimately empowering practitioners to use evidence as a tool for program improvement.

The event highlighted two practices-based approaches in juvenile justice and out-of-school time interventions. In the juvenile justice space, Dr. Mark Lipsey with Vanderbilt University used a meta-analysis (essentially, a research study of research studies) to identify what kinds of approaches are most likely to be effective in reducing recidivism for youth. After reviewing 548 studies collected over 40 years, Lipsey was able to distinguish the key characteristics found in effective programs. These characteristics included targeting high-risk offenders, a therapeutic program philosophy and a sufficient dosage of the intervention. Shay Bilchik, director of the Center for Juvenile Justice Reform at Georgetown University, then used this study to develop new tools to assess programs (brand-name or home-grown) on their inclusion of these identified best practices. The tools allow policymakers not only to understand whether their current programs (each with its own set of practices) are effective, but also to ensure that youth are matched to the program that will be most effective in supporting them. The Office of Juvenile Justice and Delinquency Prevention at the U.S. Department of Justice is now supporting this work in communities across the country through its Juvenile Justice Reform and Reinvestment Initiative (JJRRI).

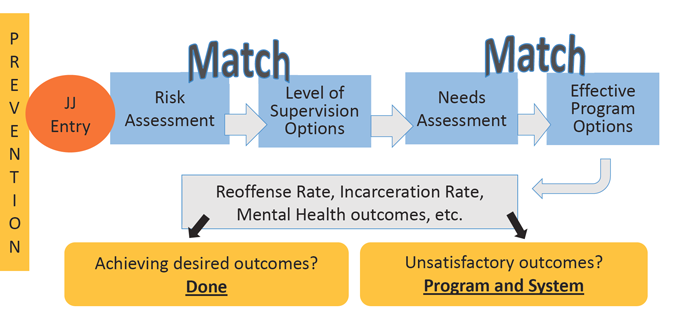

What researchers have done is give program managers a tool to assess their program and its components. They can look at other similar programs that have the same components and evidence of effectiveness. Program managers can assess whether their practices match the effective program with the same components. The figure below helps explain how this process supports practitioners across the country.

When juveniles first enter a program, they are given a risk assessment and a needs assessment to match them with the appropriate level of supervision and the most effective program options. This matching is possible because of the research Dr. Lipsey compiled, which shows which levels of supervision and program options are most effective for which youth. Practitioners can collect data on various outcome measures and use this data to see whether their intervention was effective. They can then make changes to improve their juvenile justice system as needed.

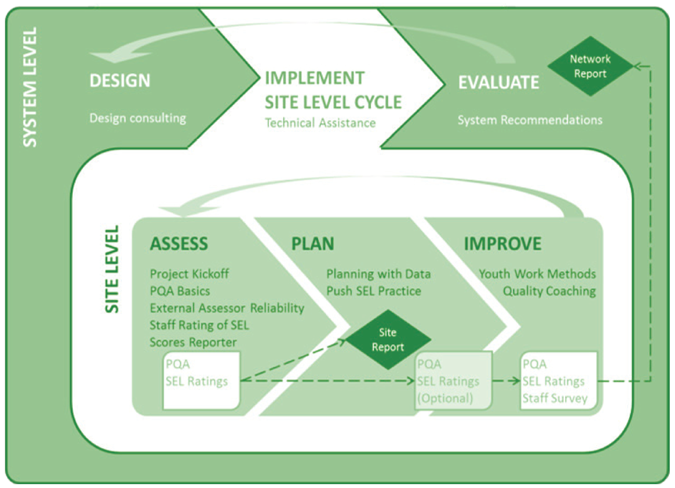

The Forum’s Weikart Center for Youth Program Quality approaches quality improvement for the afterschool space in a similar way. Led by Dr. Charles Smith, the center used input from expert practitioners and a survey of identified exemplary programs to understand and articulate the best practices for high-quality out-of-school time programs. The center then developed a set of standards for various youth development domains and identified different experiences that led to growth in these domains. It then created a tool to help programs assess, plan, and improve their practices by incorporating these experiences into their program activities. By identifying key practices of successful afterschool programs, the Weikart Center was able to build and support a continuous quality improvement process that works in the wide variety of settings within the afterschool field. By adopting a practices-based, rather than a program-based, approach, the center is now more capable of scaling evidence-based interventions within the field.

The figure below explains the process that the Weikart Center uses when interacting with programs on the ground. The center uses training and technical assistance to implement its continuous quality improvement process. Essentially, the center trains the trainers. It works with staff at local sites so that they understand the process of continuous quality improvement and the specific evidence-based practices they need to see in each of their classrooms. These staff can then execute an improvement process based on how well their staff implement the practices. The goal, as in the juvenile justice example above, is to use research to empower practitioners. By clarifying what is and is not evidence-based and implementing a process to continually assess what is happening in each classroom, the Weikart Center helps local afterschool programs improve their efforts through the use of evidence.

Attendees were excited to hear about these two examples of a practices-based approach and had questions about the best way to get initiatives like these off the ground. One of the first issues discussed was staffing. In both of these vignettes, the staff who are trained in these evidence-based practices can have varying education or training backgrounds. This is also true for other evidence-based fields such as home visiting programs. The presenters noted that a practices-based approach helps motivate and empower staff through greater expectations in the initial hiring process and more targeted training once they are employed.

Other questions concerned the costs of upfront planning. Attendees asked whether this approach would require additional resources to support understaffed agencies, train employees in a new way of doing business, and ensure accountability for meeting desired outcomes. The presenters noted that a lot of this work is about repurposing and refocusing current efforts. The attendees heard from Sonia Johnson, Oklahoma’s Executive Director for Family & Community Engagement at the State Department of Education, who noted that her agency’s switch to this approach was already covered by federal funding and allowed the state to create an improvement process for both its urban and rural programs, which face dramatically different circumstances. Essentially, the approach was about making better use of the resources they already had rather than asking for more.

The Forum ended the meeting by suggesting that this approach should expand to other policy contexts. Evidence-based policymaking is increasingly popular, particularly as the Commission on Evidence-Based Policymaking releases its report. With budgets already tightening, it is important that policymakers look to use available resources more efficiently. An evidence-based practices approach allows policymakers to incorporate evidence into their work in a way that focuses practitioners on continuous improvement instead of accountability. Policymakers should consider how evidence-based practices could spur innovation in other fields.

[i] Center for the Study of Social Policy, “Better Evidence for Decision-Makers: Emerging Pathways from Existing Knowledge.” 2017. https://cssp.org/policy/body/Better-Evidence.pdf